We have been talking about agreement lately (not sure what I am talking about? See the start of the series here) and we covered many terms that seem similar. Help!

Before you call the whole thing off and start dancing on roller skates like Fred Astaire and Ginger Rogers did in Shall We Dance, let’s clarify the difference between agreement and reliability.

When assessing agreement in medical research, we are often interested in one of three things:

1- comparing methods – à la Bland and Altman style.

2- validating an assay or analytical method.

3- assessing bioequivalence.

Agreement represents the degree of closeness between readings. We get that. Reliability, on the other hand, assesses the degree of differentiation between subjects, that is, one’s ability to tell subjects apart within a population. Yes, I realize this is a subtlety, just like the one Ella Fitzgerald and Louis Armstrong sing about in their version of Let’s Call the Whole Thing Off.

Now, when assessing agreement one will often use an unscaled index (i.e. a summary of a continuous measure on its own measurement scale, such as the Mean Squared Deviation, Repeatability Standard Deviation, Reproducibility Standard Deviation, or the Bland and Altman Limits of Agreement), whereas when assessing reliability one often uses a scaled index (i.e. a standardized measure such as the Intraclass Correlation Coefficient or Concordance Correlation Coefficient). This is because a scaled index expresses the disagreement relative to the between-subject variability and, therefore, reflects how well subjects from a population can be told apart.
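To make the distinction a little more concrete, here is a sketch using the usual textbook definitions for paired readings $Y_1$ and $Y_2$ taken on the same subjects. The symbols are my own notation for this post (means $\mu_1, \mu_2$, variances $\sigma_1^2, \sigma_2^2$, covariance $\sigma_{12}$, and $\bar{d}$, $s_d$ for the mean and standard deviation of the paired differences), and the 1.96 multiplier assumes roughly normal differences.

$$
\begin{aligned}
\mathrm{MSD} &= E\left[(Y_1 - Y_2)^2\right] = (\mu_1 - \mu_2)^2 + \sigma_1^2 + \sigma_2^2 - 2\sigma_{12} \\
\mathrm{LOA} &= \bar{d} \pm 1.96\, s_d \\
\mathrm{CCC} &= \frac{2\sigma_{12}}{\sigma_1^2 + \sigma_2^2 + (\mu_1 - \mu_2)^2} = 1 - \frac{\mathrm{MSD}}{\sigma_1^2 + \sigma_2^2 + (\mu_1 - \mu_2)^2}
\end{aligned}
$$

The MSD and LOA stay on the measurement scale, whereas the CCC divides the MSD by a quantity that grows with the spread of the subjects. For the same MSD, a more heterogeneous population therefore gives a CCC closer to 1.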

Ok – clear as mud. Here are some very basic guidelines:

1- Use descriptive stats to start with.

2- Follow it up with an unscaled index measure like the MSD or LOA, which deal with absolute values (like the difference).

3- Finish up with a scaled index measure that will yield a standardized value between -1 and +1 (like the ICC or CCC).
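The three steps above can be sketched in a few lines of Python. This is only a minimal illustration under my own assumptions: the function name `agreement_summary` and its output keys are hypothetical, the data are paired readings from two methods on the same subjects, the limits of agreement use the usual 1.96 multiplier, and the CCC uses variances with a 1/n divisor. It is not meant as a replacement for a proper stats package.

```python
from statistics import mean, stdev


def agreement_summary(x, y):
    """Summarize agreement between paired readings x and y (hypothetical helper)."""
    n = len(x)
    if n != len(y) or n < 2:
        raise ValueError("x and y must be paired and contain at least 2 readings")

    # Step 1: descriptive stats for each method.
    mean_x, mean_y = mean(x), mean(y)

    # Step 2: unscaled indices, which stay on the measurement scale.
    diffs = [a - b for a, b in zip(x, y)]
    msd = mean([d * d for d in diffs])      # mean squared deviation
    bias = mean(diffs)                      # mean difference
    sd_diff = stdev(diffs)                  # SD of the differences
    loa = (bias - 1.96 * sd_diff, bias + 1.96 * sd_diff)

    # Step 3: scaled index (concordance correlation coefficient),
    # computed with 1/n variances and covariance.
    var_x = sum((a - mean_x) ** 2 for a in x) / n
    var_y = sum((b - mean_y) ** 2 for b in y) / n
    cov_xy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y)) / n
    ccc = 2 * cov_xy / (var_x + var_y + (mean_x - mean_y) ** 2)

    return {"mean_x": mean_x, "mean_y": mean_y, "msd": msd,
            "bias": bias, "loa": loa, "ccc": ccc}


# Same one-unit disagreement, two made-up populations with different spread.
narrow = agreement_summary([1, 2, 3, 4], [2, 3, 4, 5])
wide = agreement_summary([10, 20, 30, 40], [11, 21, 31, 41])
print(narrow["msd"], round(narrow["ccc"], 3))
print(wide["msd"], round(wide["ccc"], 3))
```

In this made-up example both data sets have an MSD of 1, yet the CCC is about 0.71 for the narrow population and about 0.996 for the wide one. Same agreement, very different reliability, which is exactly the subtlety discussed above.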

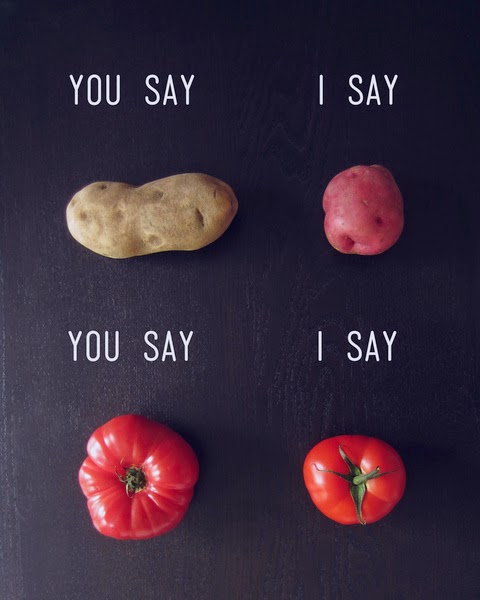

Potato, Potahtoe. Whatever.

Entertain yourself with this humorous clip from the Secret Policeman’s Ball and I’ll…

See you in the blogosphere!

Pascal Tyrrell