Hi! I’m Yan Qing Lee, an incoming third-year undergraduate double majoring in Computer Science and Psychology. This past summer, I had the opportunity to take on my first research project in artificial intelligence, and I’m excited to share my experience.

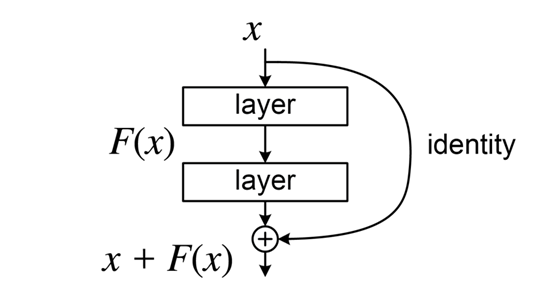

My research investigated whether individuals who receive a false-positive mammogram result from an AI model have a higher risk of being diagnosed with breast cancer later on. Past studies have found that receiving a false-positive mammogram result from radiologists is associated with a higher risk of future breast cancer, but no studies have yet investigated whether this also holds for AI breast cancer detection models. In this project, I used a longitudinal mammography dataset and ran a trained AI breast cancer classifier, an ensemble of four ConvNeXt-Small models, to identify false-positive and true-negative results. I then used Cox proportional hazards models to estimate the hazard ratio associated with receiving a false-positive result, both from the AI model and from radiologists.
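The analysis described above involves two computational steps: turning the ensemble's per-model scores into false-positive (FP) and true-negative (TN) labels, and estimating a hazard ratio for later cancer diagnosis in the FP group relative to the TN group. The sketch below illustrates both under stated assumptions and is not the project's actual code. It assumes the four ConvNeXt-Small outputs are combined by a simple mean and thresholded at a hypothetical 0.5, and it fits a single-covariate Cox model (FP = 1, TN = 0) by Newton-Raphson on the partial likelihood with Breslow handling of ties. The names `ensemble_probability`, `label_screen`, and `cox_hazard_ratio` are illustrative only. A real analysis would more likely use a library such as lifelines (`CoxPHFitter`) and also report confidence intervals and covariate adjustments.

```python
import math


def ensemble_probability(model_probs):
    """Combine per-model malignancy probabilities by simple averaging (assumed rule)."""
    return sum(model_probs) / len(model_probs)


def label_screen(model_probs, has_cancer, threshold=0.5):
    """Label one screen as TP/FP/TN/FN; the 0.5 threshold is a hypothetical choice."""
    predicted_positive = ensemble_probability(model_probs) >= threshold
    if predicted_positive:
        return "TP" if has_cancer else "FP"
    return "FN" if has_cancer else "TN"


def cox_hazard_ratio(times, events, x, max_iter=50, tol=1e-10):
    """Hazard ratio exp(beta) for a single binary covariate x (1 = FP, 0 = TN).

    times:  follow-up time until cancer diagnosis or censoring
    events: 1 if a cancer diagnosis was observed, 0 if censored
    Fits beta by Newton-Raphson on the Cox partial likelihood, using
    Breslow's handling of tied event times.
    """
    beta = 0.0
    for _ in range(max_iter):
        grad = 0.0
        hess = 0.0
        for t_i, e_i, x_i in zip(times, events, x):
            if not e_i:
                continue  # censored subjects only contribute through risk sets
            # Risk set: everyone still under follow-up at time t_i.
            s0 = 0.0
            s1 = 0.0
            for t_j, x_j in zip(times, x):
                if t_j >= t_i:
                    w = math.exp(beta * x_j)
                    s0 += w
                    s1 += w * x_j
            mean_x = s1 / s0  # weighted share of FP subjects in the risk set
            grad += x_i - mean_x
            # For binary x, x**2 == x, so the weighted variance is mean_x * (1 - mean_x).
            hess -= mean_x * (1.0 - mean_x)
        if hess == 0.0:
            break  # no information about beta (e.g. every risk set is one group)
        step = grad / hess
        beta -= step
        if abs(step) < tol:
            break
    return math.exp(beta)


if __name__ == "__main__":
    # Toy, made-up data purely for illustration.
    print(label_screen([0.7, 0.6, 0.8, 0.5], has_cancer=False))
    times = [1, 2, 3, 4, 5, 6, 7, 8]
    events = [1, 1, 1, 1, 1, 1, 1, 1]
    fp = [1, 0, 1, 1, 0, 1, 0, 0]
    print(cox_hazard_ratio(times, events, fp))
```

A hazard ratio above 1 would indicate a higher instantaneous rate of later cancer diagnosis among FP screens than among TN screens; the same function can be applied unchanged to radiologist-derived labels, which makes the AI and radiologist estimates directly comparable.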

As a student who entered the Computer Science major out-of-stream, I started the Research Opportunity Program (ROP) feeling really out of place. Although I had long known I wanted to pursue AI, I had no real experience in either AI or medical imaging, and I wondered whether I was underqualified for the position. Still, I was determined to put in as many hours as I needed to succeed.

I began by familiarizing myself with ML terminology and choosing an area of interest (breast cancer mammography) on which to base a research question. As I’m sure other ROP students would agree, this process was extremely challenging; as the weeks passed, I found that my research questions were always either over-ambitious or not feasible. Over time, however, I realized that my difficulty stemmed from my limited knowledge of how ML models actually work, and of the existing literature and its gaps in breast cancer mammography. As I dug deeper into the literature, one finding about radiologists’ false positives caught my eye, and it finally led me to my research question.

Once I began working on my project, the many challenges of research revealed themselves. These included downloading and parsing a large dataset, installing packages and working around incompatible library versions to set up a working environment, and, worst of all, discovering that the AI breast cancer detection model I had originally built my project around was not as replicable as I had assumed. Even though I had deliberately kept my research question relatively simple, the process of setting up and debugging preprocessing code, training and running an AI breast cancer classification model, and getting disappointing training results was nothing short of complicated.

Still, with weekly lab meetings keeping me on track and support from Dr. Tyrrell, Mauro, and the other students in the lab, I slowly but surely overcame each obstacle, learned an immense amount every week, and successfully completed my project. Even though I had to find a new AI model near the end and redo my experiments, my experience with the previous model meant I could set up and run the new one independently and much more efficiently than before. It was proof of how much I’d learned, and I’m glad to be able to look back and be proud of what I accomplished in the span of a few months.

At the end of it all, I have to thank Dr. Tyrrell for fostering my passion for AI and its applications in fields as impactful and important as breast cancer mammography. This experience has made me even more excited to explore applications of AI in other fields, and I can’t thank the MiData lab enough for it.