So, let’s say you have invited everyone over for the big game on Sunday (Super Bowl XLIX) but you don’t have a big-screen TV. Whoops! That sucks. Time to go shopping. Here’s the rub: which one to get? There are so many to choose from and only a little time to make the decision. Here is what you do:

1- Call your best friends to help you out

2- Make a list of all neighboring electronics stores

3- Go shopping!

OK, that sounds like a good plan, but it will take an enormous amount of time to do all of this together and, more importantly, your Lada only seats 4 comfortably and there are 8 of you.

As you are a new research scientist (see here for your story) and you have already studied the challenges of assessing agreement (see here for a refresher) you know that it is best for all raters to assess the same items of interest. This is called a fully crossed design. So in this case you and all of your friends will assess all the TVs of interest. You will then make a decision based on the ratings. Often, it is of interest to know and to quantify the degree of agreement between the raters – your friends in this case. This assessment is the inter-rater reliability (IRR).

As a quick recap,

Observed Score = True Score + Measurement Error

And

Reliability = Var(True Score) / (Var(True Score) + Var(Measurement Error))
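To make the recap concrete, here is a minimal sketch of the reliability ratio in Python. The function name and the variance values are made up for illustration; in a real study the two variances would be estimated from your ratings.

```python
def reliability(var_true, var_error):
    """Share of the observed-score variance that comes from the true score."""
    if var_true < 0 or var_error < 0:
        raise ValueError("variances cannot be negative")
    total = var_true + var_error
    if total == 0:
        raise ValueError("total variance is zero, reliability is undefined")
    return var_true / total


# Hypothetical numbers: TVs truly differ a lot (variance 9.0),
# while rater noise is smaller (variance 3.0).
print(reliability(var_true=9.0, var_error=3.0))  # 0.75
```

The more the measurement error grows relative to the true differences between TVs, the closer this ratio gets to 0.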

Fully crossed designs allow you to assess and control for any systematic bias between raters at the cost of an increase in the number of assessments made.

The problem today is that you want to minimize the number of assessments made in order to save time and keep your buddies happy. What to do? Well, you will simply perform a study where different items will be rated by different subsets of raters. This is a “not fully crossed” design!
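Here is a rough sketch of what such a design could look like in Python. Everything in it is an assumption made for illustration: the TV names, the friend labels, the `assign_raters` helper, and the choice of 4 raters per TV (one carload). It simply rotates through the list of friends so that each TV is seen by a different subset.

```python
def assign_raters(items, raters, raters_per_item):
    """Give each item a subset of raters by rotating through the rater list."""
    n = len(raters)
    if not 0 < raters_per_item <= n:
        raise ValueError("raters_per_item must be between 1 and len(raters)")
    plan = {}
    for i, item in enumerate(items):
        start = (i * raters_per_item) % n
        plan[item] = [raters[(start + j) % n] for j in range(raters_per_item)]
    return plan


# Hypothetical shopping list and crew (you plus seven friends).
tvs = ["TV-A", "TV-B", "TV-C", "TV-D"]
friends = [f"friend{i}" for i in range(1, 9)]

plan = assign_raters(tvs, friends, raters_per_item=4)

fully_crossed = len(tvs) * len(friends)                      # everyone rates everything
not_fully_crossed = sum(len(group) for group in plan.values())

for tv, group in plan.items():
    print(tv, group)
print(fully_crossed, "assessments vs", not_fully_crossed)
```

With these made-up numbers the fully crossed design needs 32 assessments and the rotated one needs 16.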

However, you must be aware that with this type of design you are at risk of underestimating the true reliability and must therefore use alternative statistics.

I will not go into statistical detail (today anyway!) but if you are interested have a peek here. The purpose of today’s post was simply to bring to your attention that you need to be very careful when assessing agreement between raters when NOT performing a fully crossed design. The good news is that there is a way to estimate reliability when you are not able to have all raters assess all the same subjects.
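For the curious, one option often used when each item is rated by a different subset of raters is the one-way random-effects intraclass correlation, usually written ICC(1,1). This is my choice of illustration and not necessarily the method described in the link above. The sketch below assumes every TV gets the same number of ratings; the function name and the scores are hypothetical.

```python
def icc_oneway(ratings):
    """One-way random-effects ICC(1,1) from a one-way ANOVA.

    ratings: list of per-item lists, each holding k scores. The scores for
    different items may come from different raters.
    """
    n = len(ratings)
    k = len(ratings[0])
    if n < 2 or k < 2:
        raise ValueError("need at least 2 items and 2 ratings per item")
    if any(len(row) != k for row in ratings):
        raise ValueError("this sketch assumes the same number of ratings per item")

    grand_mean = sum(sum(row) for row in ratings) / (n * k)
    item_means = [sum(row) / k for row in ratings]

    # Mean square between items and mean square within items.
    ms_between = k * sum((m - grand_mean) ** 2 for m in item_means) / (n - 1)
    ms_within = sum(
        (x - m) ** 2 for row, m in zip(ratings, item_means) for x in row
    ) / (n * (k - 1))

    return (ms_between - ms_within) / (ms_between + (k - 1) * ms_within)


# Hypothetical scores out of 10: four TVs, each rated by some group of 4 friends.
tv_scores = [
    [8, 7, 9, 8],
    [5, 6, 4, 5],
    [9, 9, 8, 10],
    [3, 4, 4, 2],
]
print(round(icc_oneway(tv_scores), 2))
```

Because the raters differ from TV to TV, any systematic bias between raters cannot be separated out here and ends up counted as error, which is exactly the price of skipping the fully crossed design.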

Now you can have small groups of friends who share the task of assessing TVs. This will result in fewer assessments, less time to complete the study, and – most importantly – less use of your precious Lada!

Your main concern, as you are the one buying the TV, is still: can you trust your friends’ assessment scores for TVs you did not see? But now you have a way to determine if you and your friends are on the same page!

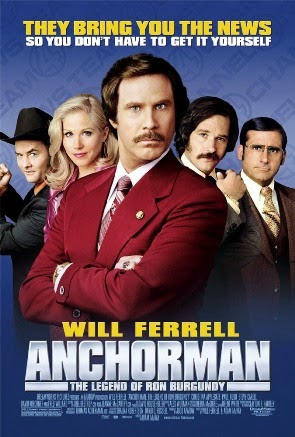

Maybe this will save you and your friends from having to Agree to Disagree as did Will Ferrell in Anchorman…

Listen to Agree to Disagree, an unreleased early song by Katy Perry, enjoy the Super Bowl (and Katy Perry) on Sunday and…

…I’ll see you in the blogosphere!

Pascal Tyrrell

Website of Prof. Pascal Tyrrell