Wait… Lasso? Isn’t a lasso that lariat or loop-like rope that cowboys use? Or perhaps you may be thinking about that tool in Photoshop that’s used for selecting free-form segments!

Well… technically neither is wrong! However, in statistics and machine learning, Lasso stands for something completely different: least absolute shrinkage and selection operator. The term was coined in 1996 by Dr. Robert Tibshirani (who was a UofT professor at the time!).

Okay… that’s cool and all, but what the heck does that actually mean? And what does it do?

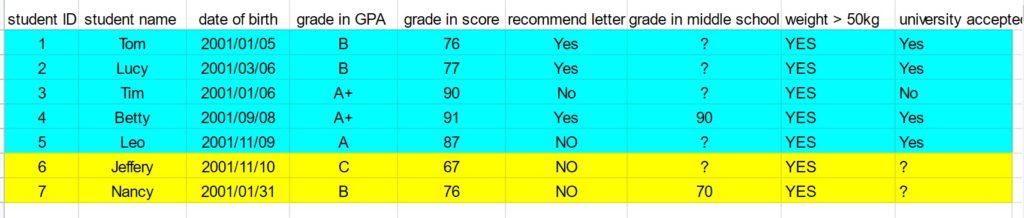

Lasso is a type of regression analysis method, meaning it tries to estimate the relationship between predictor variables and outcomes. It’s typically used to do two things at once: regularization and feature selection.

Regularization is a way of reducing overfitting, i.e. it keeps a model from fitting the “noise” and randomness in the data instead of the underlying pattern. Feature selection, on the other hand, is a form of dimension reduction: out of all the predictor variables in a dataset, it selects the few that contribute the most to the outcome variable and includes only those in the predictive model.

Lasso works by applying a fixed upper bound to the sum of the absolute values of the predictors’ coefficients in a model. To keep this sum within the bound, the algorithm shrinks some of the coefficients, particularly those of predictors that are less important to the outcome. Predictors whose coefficients are shrunk all the way to zero are not included in the final predictive model at all.
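If you’d like to see that shrinking in action, here is a minimal sketch in plain Python. A few assumptions to flag: instead of the fixed upper bound described above, it uses the equivalent “penalty” form of the problem (a larger penalty corresponds to a tighter bound), and it solves it with coordinate descent, which is just one common way to fit a Lasso model. The function names `soft_threshold` and `lasso_coordinate_descent`, the `penalty` value, and the toy data are all made up for illustration.

```python
import random


def soft_threshold(value, threshold):
    """Pull value toward zero by threshold; anything within the threshold becomes 0."""
    if value > threshold:
        return value - threshold
    if value < -threshold:
        return value + threshold
    return 0.0


def lasso_coordinate_descent(X, y, penalty, n_sweeps=100):
    """Approximately minimise (1 / (2n)) * sum(residual^2) + penalty * sum(|coef|).

    X is a list of rows, y a list of outcomes. No intercept is fitted, so this
    sketch assumes the predictors and outcome are roughly centred on zero.
    """
    n, p = len(X), len(X[0])
    columns = [[row[j] for row in X] for j in range(p)]
    coefs = [0.0] * p
    residual = list(y)  # equals y - X @ coefs while every coefficient is zero
    for _ in range(n_sweeps):
        for j, col in enumerate(columns):
            scale = sum(c * c for c in col) / n
            if scale == 0.0:
                continue  # an all-zero predictor can never earn a coefficient
            # How strongly predictor j lines up with what the others leave unexplained.
            rho = sum(c * (r + c * coefs[j]) for c, r in zip(col, residual)) / n
            new_coef = soft_threshold(rho, penalty) / scale
            delta = new_coef - coefs[j]
            residual = [r - c * delta for c, r in zip(col, residual)]
            coefs[j] = new_coef
    return coefs


# Made-up toy data: the outcome depends on the first two predictors only.
random.seed(0)
X = [[random.gauss(0, 1) for _ in range(3)] for _ in range(200)]
y = [3 * a - 2 * b + random.gauss(0, 0.1) for a, b, _ in X]

coefs = lasso_coordinate_descent(X, y, penalty=0.5)
print(coefs)
```

On this toy data, the third predictor has nothing to do with the outcome, so its coefficient should get shrunk all the way to zero, while the first two should survive but end up somewhat smaller in size than the 3 and -2 used to generate the data. Try raising `penalty` and watch more coefficients drop out!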

Lasso has applications in a variety of different fields! It’s used in finance, economics, physics, mathematics, and if you haven’t guessed already… medical imaging! As a popular feature selection technique, Lasso is often used to turn large radiomic datasets into easily interpretable predictive models that help researchers study, treat, and diagnose diseases.

Now on to the fun part: using Lasso in a sentence by the end of the day! (see rules here)

Serious: This predictive model I got using Lasso has amazing accuracy for detecting the presence of a tumour!

Less serious: I went to my professor’s office hours for some help on how to use Lasso, but out of nowhere he pulled out a rope!

See you in the blogosphere!

Jessica Xu