Last week I met with Helen, a clinical investigator program radiology resident from our department, about her research (shout out to Dr Laurent Milot’s research group). When discussing predictors and outcomes for her retrospective study, it was suggested that some continuous variables be broken up into levels or categories based on given cut-points. This practice is often encountered in the world of medical research. The main reason? People in the medical community find it easier to understand results that are expressed as proportions, odds ratios, or relative risks. When working with continuous variables we end up talking about parameter estimates / beta weights and such – not as “reader friendly”.

Unfortunately, as Neil Sedaka sang about in his famous song Breaking Up Is Hard to Do, by breaking up continuous variables you pay a stiff penalty when it comes to your ability to describe the relationship that you are interested in and the sample size requirements (see loss of power) of your study.

You are now a newly minted research scientist (need a refresher? See Pocket Protector) and are interested in discovering relationships among variables or between predictors and outcomes. The more accurate your findings, the better the description of the relationships and the better the interpretation and conclusions you can make. The bottom line is that dichotomizing or categorizing a continuous measure will result in loss of information. Essentially, the “signal”, which is the information captured by your measure, will be reduced by categorization. Therefore, when you perform a statistical test that compares this signal to the “noise” or error of the model (observed differences between your patients, for example) you will find yourself at a disadvantage (loss of power). David Streiner (great author and great guy!) gives a more complete explanation in one of his papers.
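To see the penalty for yourself, here is a minimal simulation sketch. Everything in it is an assumption made for illustration (a normally distributed predictor, a linear relationship with slope 0.3, 200 patients per study, a median split as the cut-point); none of it comes from Helen's study. It compares how strongly the outcome correlates with the continuous predictor versus the same predictor after it has been split in two.

```python
import math
import random
import statistics


def pearson_r(x, y):
    """Pearson correlation between two equal-length sequences."""
    mx, my = statistics.fmean(x), statistics.fmean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def simulate(n=200, slope=0.3, n_sims=500, seed=1):
    """Average correlation with the outcome: continuous predictor vs. its median split."""
    rng = random.Random(seed)
    r_continuous, r_split = [], []
    for _ in range(n_sims):
        x = [rng.gauss(0, 1) for _ in range(n)]
        y = [slope * xi + rng.gauss(0, 1) for xi in x]
        cut = statistics.median(x)
        # Dichotomize: 1 if above the median, 0 otherwise.
        x_binary = [1.0 if xi > cut else 0.0 for xi in x]
        r_continuous.append(pearson_r(x, y))
        r_split.append(pearson_r(x_binary, y))
    return statistics.fmean(r_continuous), statistics.fmean(r_split)


mean_r_continuous, mean_r_dichotomized = simulate()
print(f"continuous: {mean_r_continuous:.3f}, median split: {mean_r_dichotomized:.3f}")
```

Under these assumptions the median-split version should show a noticeably weaker correlation, which is the lost “signal” in action. A weaker signal against the same noise means you need more patients to detect the same relationship.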

Now, as we see in the funny movie with Vince Vaughn and Jennifer Aniston, The Break Up, there are times when categorization may make sense. For example, when the variable you are considering is not normally distributed (see Are You My Type?) or when the relationship that you are studying is not linear. We will talk about these situations in a later post.

Don’t forget: you will get further ahead if you keep your variables as continuous data whenever possible.

See you in the blogosphere,

Pascal Tyrrell

You like potato and I like potahto… Let’s Call the Whole Thing Off!

We have been talking about agreement lately (not sure what I am talking about? See the start of the series here) and we covered many terms that seem similar. Help!

Before you call the whole thing off and start dancing on roller skates like Fred Astaire and Ginger Rogers did in Shall We Dance, let’s clarify a little the difference between agreement and reliability.

When assessing agreement in medical research, we are often interested in one of three things:

1- comparing methods – à la Bland and Altman style.

2- validating an assay or analytical method.

3- assessing bioequivalence.

Agreement represents the degree of closeness between readings. We get that. Now reliability on the other hand actually assesses the degree of differentiation between subjects – so one’s ability to tell subjects apart from within a population. Yes, I realize this is a subtlety just as Ella Fitzgerald and Louis Armstrong sing about in the original Let’s Call the Whole Thing Off.

Now, often when assessing agreement one will use an unscaled index (i.e. a continuous measure for which you calculate the Mean Squared Deviation, Repeatability Standard Deviation, Reproducibility Standard Deviation, or the Bland and Altman Limits of Agreement) whereas when assessing reliability one often uses a scaled index (i.e. a measure for which you can calculate the Intraclass Correlation Coefficient or Concordance Correlation Coefficient). This is because a scaled index mostly depends on between-subject variability and, therefore, allows for the differentiation of subjects from a population.
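A quick way to convince yourself that a scaled index depends on between-subject variability is to hold the measurement error fixed and change only how different the subjects are from one another. The sketch below is illustrative only: the one-way random-effects form of the ICC, the simulated readings, and the helper names `icc_oneway` and `simulate_readings` are all assumptions chosen for this example.

```python
import random
import statistics


def icc_oneway(ratings):
    """One-way random-effects ICC for a balanced list of per-subject readings."""
    n = len(ratings)       # number of subjects
    k = len(ratings[0])    # readings per subject
    grand_mean = statistics.fmean(v for row in ratings for v in row)
    subject_means = [statistics.fmean(row) for row in ratings]
    # Between-subject and within-subject mean squares from a one-way ANOVA.
    msb = k * sum((m - grand_mean) ** 2 for m in subject_means) / (n - 1)
    msw = sum(
        (v - m) ** 2 for row, m in zip(ratings, subject_means) for v in row
    ) / (n * (k - 1))
    return (msb - msw) / (msb + (k - 1) * msw)


def simulate_readings(between_sd, error_sd=1.0, n_subjects=200, k=2, seed=7):
    """Simulate k readings per subject with a fixed measurement error SD."""
    rng = random.Random(seed)
    rows = []
    for _ in range(n_subjects):
        true_value = rng.gauss(50, between_sd)
        rows.append([true_value + rng.gauss(0, error_sd) for _ in range(k)])
    return rows


# Same measurement error in both cases; only the spread between subjects changes.
icc_homogeneous = icc_oneway(simulate_readings(between_sd=1.0))
icc_heterogeneous = icc_oneway(simulate_readings(between_sd=5.0))
print(f"similar subjects: {icc_homogeneous:.2f}, varied subjects: {icc_heterogeneous:.2f}")
```

The readings are equally “close” in both scenarios, yet the ICC is much higher when the subjects differ more from each other. That is the sense in which reliability is about telling subjects apart rather than about closeness alone.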

Ok – clear as mud. Here are some very basic guidelines:

1- Use descriptive stats to start with.

2- Follow it up with an unscaled index measure like the MSD or LOA, which deal with absolute values (like the difference).

3- Finish up with a scaled index measure that will yield a standardized value between -1 and +1 (like the ICC or CCC).
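The guidelines above can be sketched in a few lines. This is a rough illustration, not a definitive recipe: the paired readings are made up, `agreement_summary` is a hypothetical helper, the limits of agreement use the usual mean difference plus or minus 1.96 standard deviations, and the scaled index shown is Lin's concordance correlation coefficient computed with divide-by-n variances.

```python
import statistics


def agreement_summary(x, y):
    """Unscaled (MSD, limits of agreement) and scaled (CCC) indices for paired readings."""
    diffs = [a - b for a, b in zip(x, y)]
    mean_diff = statistics.fmean(diffs)
    sd_diff = statistics.stdev(diffs)  # sample SD of the differences

    # Unscaled indices: expressed in the units of the measurement itself.
    msd = statistics.fmean(d ** 2 for d in diffs)
    loa = (mean_diff - 1.96 * sd_diff, mean_diff + 1.96 * sd_diff)

    # Scaled index: Lin's CCC, using divide-by-n variances and covariance.
    mean_x, mean_y = statistics.fmean(x), statistics.fmean(y)
    cov_xy = statistics.fmean((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    ccc = 2 * cov_xy / (
        statistics.pvariance(x) + statistics.pvariance(y) + (mean_x - mean_y) ** 2
    )
    return {"mean_diff": mean_diff, "msd": msd, "loa": loa, "ccc": ccc}


# Hypothetical readings of the same eight subjects by two methods.
method_a = [10.1, 12.3, 9.8, 14.2, 11.5, 13.0, 10.9, 12.8]
method_b = [10.4, 12.0, 10.1, 14.6, 11.9, 13.1, 11.4, 12.9]

summary = agreement_summary(method_a, method_b)
print(summary)
```

The MSD and the limits of agreement come out in the units of the measurement, while the CCC is unitless and bounded between -1 and +1, which is what makes it easy to compare across studies.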

Potato, potahto. Whatever.

Entertain yourself with this humorous clip from the Secret Policeman’s Ball and I’ll…

See you in the blogosphere!

Pascal Tyrrell

2 Legit 2 Quit

MC Hammer. Now those were interesting pants! Heard of the slang expression “Seems legit”? Well, “legit” (short for legitimate) was popularized by MC Hammer’s song 2 Legit 2 Quit. I had blocked the memories of that video for many years. Painful – and no I never owned a pair of Hammer pants!

Whenever you sarcastically say “seems legit” you are suggesting that you question the validity of the finding. We have been talking about agreement lately and we have covered precision (see Repeat After Me), accuracy (see Men in Tights), and reliability (see Mr Reliable). Today let’s cover validity.

So, we have talked about how reliable a measure is under different circumstances and this helps us gauge its usefulness. However, do we know if what we are measuring is what we think it is? In other words, is it valid? Now reliability places an upper limit on validity – the higher the reliability, the higher the maximum possible validity. So random error will affect validity by reducing reliability whereas systematic error can directly affect validity – if there is a systematic shift of the new measurement from the reference or construct. When assessing validity we are interested in the proportion of the observed variance that reflects variance in the construct that the method was intended to measure.
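For those who like to see the ceiling written down, here is one common way to express it. This assumes the classical test theory framework, which this post does not name explicitly, and the symbols are chosen here for illustration: $\rho_{XY}$ is the observed correlation between the new measure $X$ and the reference $Y$ (the validity coefficient), $\rho_{T_X T_Y}$ is the correlation between their error-free true scores, and $\rho_{XX'}$ and $\rho_{YY'}$ are the reliabilities of the two measures.

```latex
\rho_{XY} \;=\; \rho_{T_X T_Y}\,\sqrt{\rho_{XX'}\,\rho_{YY'}}
\;\le\; \sqrt{\rho_{XX'}}
```

Under that assumption, a measure with a reliability of 0.64 could not show a validity coefficient above 0.80, no matter how well it captures the construct.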

***Too much stats alert*** Take a break and listen to Ice, Ice, Baby from the same era as MC Hammer and when you come back we will finish up with validity. Pants seem similar – agree? 🙂

OK, we’re back. The most challenging aspect of assessing validity is the terminology. There are several different types of validity depending on the type of reference standard you decide to use (details to follow in later posts):

1- Content: the extent to which the measurement method assesses all the important content.

2- Construct: when measuring a hypothetical construct that may not be readily observed.

3- Convergent: new measurement is correlated with other measurements of the same construct.

4- Discriminant: new measurement is not correlated with unrelated constructs.

So why do we assess validity? Because we want to know the nature of what is being measured and the relationship of that measure to its scientific aim or purpose.

I’ll leave you with another “seems legit” picture that my kids would appreciate…

See you in the blogosphere,

Pascal Tyrrell

Mr Reliable

Kevin Durant is Mr Reliable

Being reliable is an important and sought after trait in life. Kevin Durant has proven himself to be just that to the NBA. Would you agree (pun intended)? So, we have been talking about agreement lately and we have covered precision (see Repeat After Me) and accuracy (see Men in Tights). Today let’s talk a little about reliability.

See you in the blogosphere,

Pascal Tyrrell

Men in Tights?

One of the first movies my parents took me to see was Disney’s Robin Hood in 1973. This was back in the days when movies were viewed in theaters and TV was still black and white for most people. One of Robin’s most redeeming qualities is his prowess as an archer. He simply never misses his target. Well, maybe not so much in Mel Brooks’s rendition, Robin Hood: Men in Tights!

We have been talking about agreement lately and last time we covered precision (see Repeat After Me). We discussed that precision is most often associated with random error around the expected measure. So, now you are thinking: how about the possibility of systematic error? You are right. Let’s take Robin Hood as an example. If he were to loose 3 arrows at a target and all of them were to land in the bulls-eye then you would say that he has good precision – all arrows were grouped together – and good accuracy as all arrows landed in the same ring. Accuracy is a measure of “trueness”: the least amount of bias, without knowing the true value. Now if all 3 arrows landed in the same ring but in different areas of the target he would have good accuracy – all 3 arrows receive the same points for being in the same ring – but poor precision as they are not grouped together.

As agreement is a measure of “closeness” between readings, it is not surprising then that it is a broader term that contains both accuracy and precision. You are interested in how much random error is affecting your ability to measure something AND whether or not there also exists a systematic shift in the values of your measure. The first results in an increased level of background noise (variability) and the latter in the shift of the mean of your measures away from the truth. Both are important when considering overall agreement.
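If you would like to put numbers on Robin's archery, here is a small sketch. It is only an illustration under stated assumptions: the arrow coordinates are invented, the bulls-eye sits at (0, 0), the systematic shift is taken as the distance from the bulls-eye to the centre of the group, and the random scatter as the root-mean-square distance of the arrows from that centre. The function name `accuracy_and_precision` is hypothetical.

```python
import math
import statistics


def accuracy_and_precision(points, target=(0.0, 0.0)):
    """Return (systematic shift of the group centre, scatter around that centre)."""
    centre_x = statistics.fmean(p[0] for p in points)
    centre_y = statistics.fmean(p[1] for p in points)
    # Systematic error: how far the centre of the group sits from the target.
    shift = math.hypot(centre_x - target[0], centre_y - target[1])
    # Random error: root-mean-square distance of the arrows from their own centre.
    scatter = math.sqrt(
        statistics.fmean((x - centre_x) ** 2 + (y - centre_y) ** 2 for x, y in points)
    )
    return shift, scatter


# Three arrows tightly grouped but away from the bulls-eye.
tight_but_off = [(3.0, 3.1), (3.1, 2.9), (2.9, 3.0)]
# Three arrows spread around the bulls-eye.
scattered_on_target = [(2.0, 0.0), (-1.0, 1.7), (-1.0, -1.7)]

shift_tight, scatter_tight = accuracy_and_precision(tight_but_off)
shift_spread, scatter_spread = accuracy_and_precision(scattered_on_target)
print(f"tight group: shift={shift_tight:.2f}, scatter={scatter_tight:.2f}")
print(f"spread group: shift={shift_spread:.2f}, scatter={scatter_spread:.2f}")
```

The first group has little scatter but a large shift, and the second has the reverse. Overall agreement suffers in both cases, just for different reasons.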

OK, take a break and watch Shrek Robin Hood. The first of a series is always the best…

Now the concepts of accuracy and precision originated in the physical sciences. Not to be outdone, the social sciences decided to define their own terms of agreement – validity and reliability. We will discuss these next time after you listen to Bryan Adams – Everything I Do from the Robin Hood soundtrack. Great tune.

See you in the blogosphere,

Pascal Tyrrell

Repeat After Me…

So, in my last post (Agreement Is Difficult) we started to talk about agreement which measures “closeness” between things. We saw that agreement is broadly defined by accuracy and precision. Today, I would like to talk a little more about the latter.

The Food and Drug Administration (FDA) defines precision as “the degree of scatter between a series of measurements obtained from multiple sampling of the same homogeneous sample under the prescribed conditions”. This means precision is only comparable under the same conditions and generally comes in two flavors:

1- Repeatability which measures the purest form of random error – not influenced by any other factors. The closeness of agreement between measures under the exact same conditions, where “same condition” means that nothing has changed other than the times of the measurements.

2- Reproducibility is similar to repeatability but represents the precision of a given method under all possible conditions on identical subjects over a short period of time. So, same test items but in different laboratories with different operators and using different equipment for example.
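One common way to separate these two flavors is a one-way analysis of variance on a balanced design, where the within-laboratory spread estimates repeatability and the within-laboratory plus between-laboratory spread estimates reproducibility. The sketch below assumes that approach; the three laboratories, their readings, and the helper `precision_components` are all made up for illustration.

```python
import math
import statistics


def precision_components(readings_by_lab):
    """Repeatability and reproducibility SDs from a balanced one-way layout."""
    labs = list(readings_by_lab.values())
    p = len(labs)        # number of laboratories
    n = len(labs[0])     # repeat readings per laboratory
    lab_means = [statistics.fmean(lab) for lab in labs]
    grand_mean = statistics.fmean(lab_means)
    # Within-lab mean square: random error under the same conditions.
    msw = sum(
        (v - m) ** 2 for lab, m in zip(labs, lab_means) for v in lab
    ) / (p * (n - 1))
    # Between-lab mean square, then the between-lab variance component.
    msb = n * sum((m - grand_mean) ** 2 for m in lab_means) / (p - 1)
    between_lab_var = max(0.0, (msb - msw) / n)
    repeatability_sd = math.sqrt(msw)
    reproducibility_sd = math.sqrt(msw + between_lab_var)
    return repeatability_sd, reproducibility_sd


# Hypothetical repeat readings of the same test item in three laboratories.
readings = {
    "lab_a": [10.1, 10.3, 9.9],
    "lab_b": [10.8, 11.0, 10.9],
    "lab_c": [9.5, 9.7, 9.6],
}

repeatability_sd, reproducibility_sd = precision_components(readings)
print(f"repeatability SD: {repeatability_sd:.3f}, reproducibility SD: {reproducibility_sd:.3f}")
```

By construction the reproducibility SD can never be smaller than the repeatability SD, which matches the idea that changing laboratories, operators, and equipment can only add variability.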

Now, when considering agreement, if one of the readings you are collecting is an accepted reference then you are most probably interested in validity (we will talk about this in a future post), which concerns the interpretation of your measurement. On the other hand, if all of your readings are drawn from a common population then you are most likely interested in assessing the precision of the readings – including repeatability and reproducibility.

As we have just seen, not all repeats are the same! Think about what it is that you want to report before you set out to study agreement – or you could be destined to do it over again as does Tom Cruise in his latest movie Edge of Tomorrow where he lives, dies, and then repeats until he gets it right…

See you in the blogosphere,

Pascal Tyrrell

Agreement Is Difficult: So What Are We Gonna Do? I Dunno, What You Wanna Do?

It is never easy to come to an agreement – even amongst friends! The Vultures from Disney’s The Jungle Book (oldie but a goodie) certainly know this. In medical research measuring agreement is also a challenge. In this series of posts I am going to talk about agreement and how it is measured.

Agreement measures the “closeness” between things. It is a broad term that contains both “accuracy” and “precision”. So, let’s say you are shopping for screen protectors for your wonderful new phone. You head to the internet and start going through the gazillion links advertising screen protectors of all sizes and styles. As you just spent your savings on the phone, you do not have much money left over for the screen protector. You decide on a generic brand and order a pack of 10. After an unbearable wait of a week to receive them in the mail you open the pack and find that even though you ordered the screen protector to fit your specific phone they are a little small… except for two that fit perfectly! What? That’s annoying.

So, how close are the screen protectors to being “true” to the expected product? This is agreement. Now, most of them are a little small. This represents poor “accuracy”. This is because there exists a systematic bias. If you took the mean size of these 10 protectors you would find that it deviated from the true expected value – size in this case. Furthermore, you found that two of the 10 protectors actually fit your phone screen rather well. This is great, but this inconsistency between your protectors represents poor “precision”. This time we are interested in the degree of scatter between your measurements – a measure of within sample variation due to random error (see my Dickens post for more info).
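Here is the screen protector story in numbers. The widths and the 70.0 mm target are invented for illustration, and the variable names are hypothetical. The sketch shows that the overall “distance from truth” (the mean squared deviation from the expected size) splits into a systematic part (bias squared) and a random part (the variance around the pack's own mean).

```python
import statistics

# Hypothetical widths (mm) of the 10 protectors; the phone needs 70.0 mm.
expected_width = 70.0
widths = [69.0, 69.1, 68.9, 69.0, 69.2, 68.8, 69.0, 69.0, 70.0, 70.0]

# Accuracy: systematic shift of the pack's mean away from the expected size.
bias = statistics.fmean(widths) - expected_width

# Precision: scatter of the protectors around their own mean (divide-by-n variance).
scatter_variance = statistics.pvariance(widths)

# Overall closeness to the expected size.
mean_squared_deviation = statistics.fmean((w - expected_width) ** 2 for w in widths)

print(f"bias: {bias:.2f} mm")
print(f"variance around the mean: {scatter_variance:.3f}")
print(f"mean squared deviation: {mean_squared_deviation:.3f}")
print(f"bias^2 + variance: {bias ** 2 + scatter_variance:.3f}")
```

The last two printed values match, which is a compact way of saying that agreement contains both accuracy and precision: you can miss because the whole pack is shifted, because the protectors are inconsistent, or both.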

Now the concepts of accuracy and precision originated in the physical sciences where direct measurements are possible. Not to be outdone, the social sciences (and then soon to adopt medical sciences!) decided to establish similar concepts – validity and reliability. We will discuss these in a later post but for now simply remember that the main differences are that a reference is required for validity and that both validity and reliability are most often assessed with scaled indices.

Phew! That was a little confusing. Have a listen to We Just Disagree from Dave Mason to relax.

Next post we will look a little more closely at two special kinds of precision – repeatability and reproducibility.

See you in the blogosphere,

Pascal Tyrrell

It’s All Relevant According to Einstein… or Was It Relative?

One of Einstein’s many theories is that a light beam always appears to have the same speed, no matter how fast you are moving relative to it. This theory is also one of the foundations of Einstein’s special theory of relativity. So why the Mini Einstein Bobble Head? Because of the Night at the Museum 2 movie, of course! Have a peek at the trailer and come back.

OK, the last letter in our F.I.N.E.R. mnemonic – a convenient way to remember what makes a good research question – is R. We covered E for Ethical last time and today we will go over R for Relevant – not relative (pay attention now!).

So, you are now a junior researcher with a newly minted pocket protector and have decided to step back a minute and assess your research question using F.I.N.E.R. Among the 5 characteristics we have discussed this last one is an important one. Let’s go back to F is for Feasible where we were thinking of a way to survey your friends about going camping at the end of the semester to celebrate the start of summer. The results of your survey will provide you with important information. Not only will they influence your decision to have the event or not, but they will also allow for promotion of the event as being “really popular” (important to many participants) and for better planning (important to the organizing committee). The results of the survey are “relevant”.

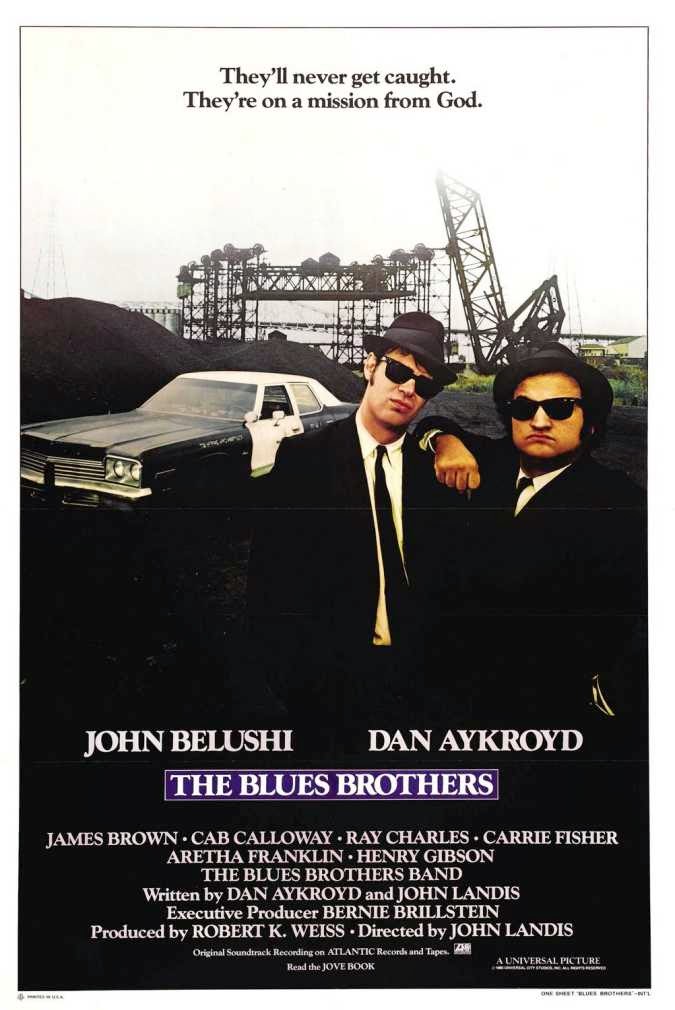

A Little Respect To Avoid The Blues… Brothers?

In her famous song Respect (have a listen while we chat), Aretha Franklin provides us with a perfect segue to the next letter in our F.I.N.E.R. mnemonic – a convenient way to remember what makes a good research question. We covered N for Novel last time and today we will go over E for Ethical.

Everyone has an idea of what they consider to be “right”. Ethics is a branch of philosophy that focuses on studying what is morally right and wrong in our society, setting standards to live by that will be in everyone’s best interest. More importantly, ethics also involves the continuous effort of studying our own moral beliefs and our moral conduct, and striving to ensure that we live up to standards that are reasonable and solidly based.

Now The Blues Brothers may not have operated with completely ethical methods but you should when you perform research. Wondering what the Blues Brothers have to do with any of this? Watch the trailer and you will see Aretha singing “R-E-S-P-E-C-T”. She wants to be treated “right” just as your subjects will in your study.

But what if you are doing research on your own for fun and are not sure? Ask Mom, she’ll know.

New Gold Dream: Is It that Simple?

What a great album from Simple Minds. Ahhh, the 80’s. Their title track New Gold Dream should get you in the mood for the next letter in our F.I.N.E.R. mnemonic – a convenient way to remember what makes a good research question. We covered I for Interesting last time and today we will go over N for Novel.

No need to reinvent the wheel. You want to save your precious energy and time for answering a question that will move you forward in your area of science.

See you in the blogosphere,